So, last Sunday, Daylight Saving Time ended, time to reset the clocks. Folks in the States do it this weekend, but well… this post ain’t about time. But when the clocks are being reset anyway, it might be a good time to test the Residual Current Device.

I have a bunch of Single Board Computers running, a Raspberry Pi 1, 3, 4, an Odroid U3, a Pine64. Need to shut them down before yanking out the power, and when shutting them down, it is also a good time to make sure all updates are installed before doing so, right?

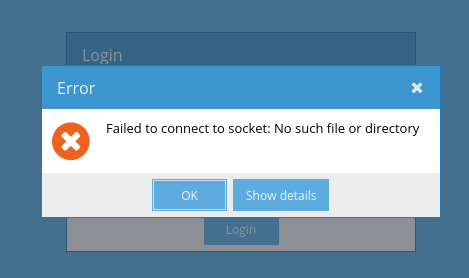

Well… while installing updates on the Odroid U3, I noticed something strange:

error: command terminated by signal 4: Illegal instruction

After some googling around I found something that looks like this issue. It seems the C library that the updates installed requires a newer kernel than the one I was currently running. The new kernel might be a dependency of the C library, and it might have been installed, but a new kernel doesn’t become active until a reboot.
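A minimal sketch of how one could check for this "new kernel installed, old kernel still running" situation. It assumes the Arch layout, where every installed kernel has a directory under `/usr/lib/modules` and the old directory disappears on upgrade; the function names `reboot_pending` and `installed_kernels` are made up for this example.

```python
import os
import platform


def reboot_pending(running, installed):
    """Return True when the running kernel has no matching modules directory.

    Assumption: a kernel upgrade removes the old modules directory, so the
    running release no longer shows up among the installed ones.
    """
    return running not in installed


def installed_kernels(modules_dir="/usr/lib/modules"):
    """List the entries of the modules directory (assumed: one per kernel)."""
    try:
        return sorted(os.listdir(modules_dir))
    except OSError:
        return []


if __name__ == "__main__":
    running = platform.release()  # same string as `uname -r`
    if reboot_pending(running, installed_kernels()):
        print(f"Running {running}, but its modules are gone: reboot first.")
    else:
        print(f"Running kernel {running} matches an installed kernel.")
```

This only compares names, so leftover directories from other packages could give a false "all fine". It is a hint, not a guarantee.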

So, I rebooted the system, and it booted fine. However, since the previous attempt to install updates included those signal 4 messages, it might be wise to reinstall all packages.

`# pacman -Qqn | pacman -S -`

While reinstalling everything, it prompted me to update the bootloader. And I said yes… well…. shouldn’t have, that would have saved me some time… I guess you know the result: the system didn’t boot. Somehow the new bootloader changed the boot configuration. I would have expected any U-Boot to load the boot.scr file and just boot from that. But somehow the device IDs were all wrong: it was trying to boot from mmc 0, while my SD card was mmc 1. I ended up tinkering with the boot scripts, first fixing the mmc to 1, and then, rather ugly, setting the root partition to /dev/mmcblk0p1, rather than using the UUIDs. For some reason U-Boot didn’t read and/or pass the UUID correctly. I might do a complete reinstall and fix the boot configuration of my Odroid U3, but it has always been a mess, and when updating the mess, it becomes even messier.

But after that excursion to U-Boot, the system booted, and was up and running again, or at least, so I thought.

Later that Sunday, I was about to prepare the playlist for my radio show, which I do on Tuesdays. Yeah, I picked up my old hobby of hosting radio shows again. Radio BlaatSchaap has risen from the digital ashes when covid hit the world. But well, where was I? So, I was going to prepare some music, but then… I discovered I couldn’t access the music. Now, the music is stored on a USB hard disk connected to the Odroid, and is accessed over the network. So… what the ****? The system was up again, wasn’t it?

Turned out… the system entered a faulty state:

[ 31.474178] blk_update_request: I/O error, dev mmcblk0, sector 137032 op 0x1:(WRITE) flags 0x800 phys_seg 1 prio class 0

[ 31.485018] Buffer I/O error on dev mmcblk0p2, logical block 233, lost async page write

[ 34.475437] s3c-sdhci 12530000.sdhci: Card stuck in wrong state! card_busy_detect status: 0xf00

[ 34.478494] mmcblk0: recovery failed!

The SD card was misbehaving. Why was this? I had recently updated the kernel, but this is unlikely to be a kernel issue, as the system had been running on this kernel earlier that day. Inserting the SD card in my laptop gave similar errors.

[25440.796103] blk_update_request: I/O error, dev mmcblk0, sector 137144 op 0x1:(WRITE) flags 0x800 phys_seg 1 prio class 0

[25440.796112] Buffer I/O error on dev mmcblk0p2, logical block 247, lost async page write

[25443.799351] sdhci-pci 0000:24:00.1: Card stuck in wrong state! card_busy_detect status: 0xf00

[25443.799358] mmcblk0: recovery failed!

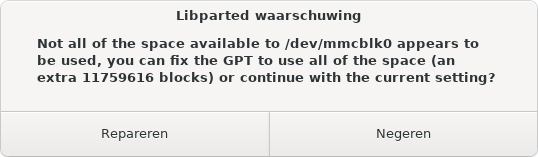

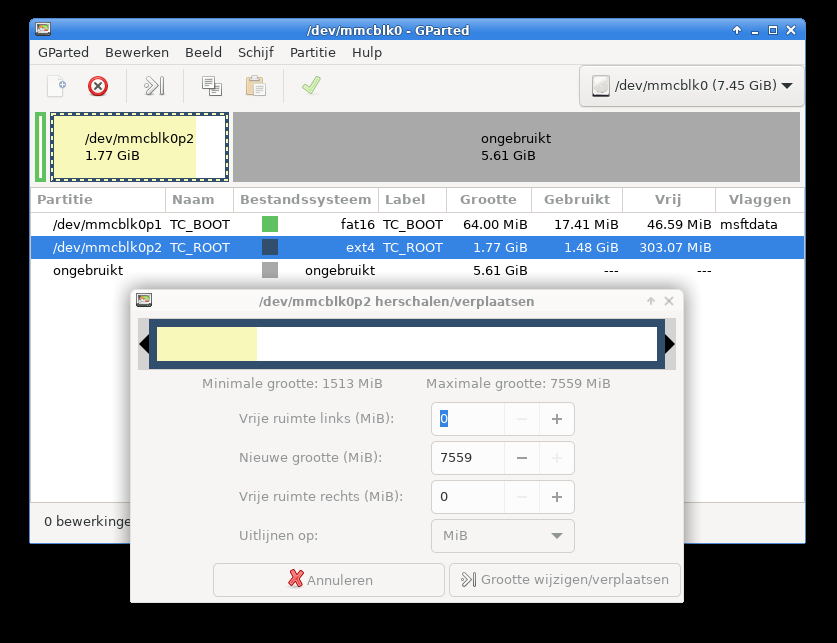

Further testing showed that reading succeeds, but writing fails. My best guess: the SD card is worn out. The reinstalling of all packages caused many writes, and a few hours later, it was one write too many.
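For what it’s worth, a "reads work, writes don’t" test can be sketched in a few lines. This is not the exact test I ran, just a minimal illustration; the function name `write_verify` and the path in the usage note are hypothetical.

```python
import os


def write_verify(path, size=1024 * 1024):
    """Write random bytes to `path`, force them to the device, read them back.

    Returns True when the data read back matches what was written.
    Any OSError (for example an I/O error reported on fsync) counts as failure.
    """
    payload = os.urandom(size)
    try:
        with open(path, "wb") as handle:
            handle.write(payload)
            handle.flush()
            os.fsync(handle.fileno())  # ask the kernel to push the data to the device
        with open(path, "rb") as handle:
            # Note: this read may still be served from the page cache.
            return handle.read() == payload
    except OSError:
        return False
```

Calling something like `write_verify("/mnt/sdcard/write-test.bin")` on the mounted card should return `False` on a card in this state, since the failed write surfaces as an error on `fsync`. A `True` result is weaker evidence, because the read-back can come from the cache rather than the card.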

I created an image of the SD card, and fsck’ed it. The file system was in good condition. Therefore I wrote the image to a new SD card, inserted it in the Odroid, and it was up and running again.
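When imaging a card that is on its way out, it is reassuring to know the image really matches what was read. A rough sketch of a chunked copy with a SHA-256 check follows; the helper names and the device and file names in the usage note are assumptions for illustration, and it does not replace the fsck step.

```python
import hashlib


def copy_with_digest(source, target, chunk_size=4 * 1024 * 1024):
    """Copy `source` to `target` in chunks; return the SHA-256 of the data read."""
    digest = hashlib.sha256()
    with open(source, "rb") as src, open(target, "wb") as dst:
        while True:
            chunk = src.read(chunk_size)
            if not chunk:
                break
            digest.update(chunk)
            dst.write(chunk)
    return digest.hexdigest()


def file_digest(path, chunk_size=4 * 1024 * 1024):
    """Return the SHA-256 of a file or block device, read in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()
```

Usage would be along the lines of `copy_with_digest("/dev/mmcblk0", "odroid-u3.img") == file_digest("odroid-u3.img")`, run as root. Comparing the image against the new card this way only works if both are the same size, since a larger card has extra bytes at the end.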

One curious fact: when inserting the SD card in a USB SD reader, rather than in the native SD slot in my laptop, I am able to write to the card. Possibly the cheap Chinese SD reader fails to implement error checking?

But for a few tasks I might require a Microsoft Windows installation. For this purpose I have downloaded the ISO for Microsoft Windows 10 21H1. Creating a bootable medium out of this is some hassle, as booting from UEFI requires the large images to be split. That’s something I might discuss another time, and it is explained out there anyway.
